Table_ref (more info in docs here) references the dataset set table, in this case, hacker_news.stories. The extract_table method takes three parameters: To export data from BigQuery, the Google BigQuery API uses extract_table, which we’ll use here (you can find more info about this method in the docs). Now it’s time to extract and export our sample (or real, in your case) dataset. ✅ Import the BigQuery client library from Google Cloud ✅ Install the Google Cloud API client library ✅ Create a new project in our code editor

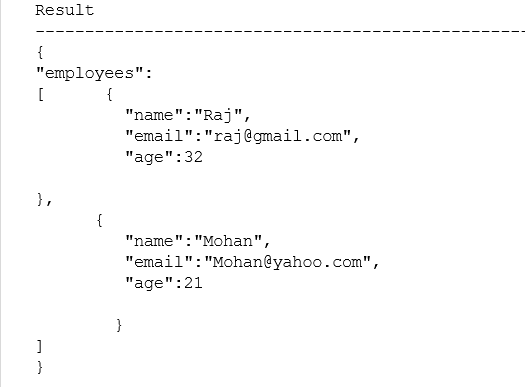

✅ (Optional) Browse public BigQuery datasets and choose HackerNews to create a new project with that data ✅ Name the bucket and ensure default settings are set so you’ll have a CSV to download later ✅ Create a bucket in Google Cloud Storage ✅ Create credentials to access the Google BigQuery API (and save to your local storage) ✅ Select the needed roles and permissions (BigQuery User and Owner) Here’s a summary of what we’ve by the end of this step: ```CODE language-python``` client = _service_account_json(SERVICE_ACCOUNT_JSON) bucket_name = ‘extracted_dataset’ project = “bigquery-public-data” Dataset_id = “hacker_news” Table_id = “stories” ] project: name of the specific project working on in BigQuery.bucket_name: name of the cloud storage bucket.To connect the Python BigQuery client to the public dataset, the “stories” table within our “hacker_news” dataset, we’ll need to set multiple variables first: Head on over to the Google Cloud console, go to IAM & Admin, and select service accounts. To start out, you’ll need to create a Google Cloud service account if you don’t already have one. OK, let’s get cooking with Google BigQuery. For details see the related documentation. If you’re new to BigQuery, check out this documentation. I’ll also cover a couple of alternative export methods in case this isn’t your jam.īefore we get too far into things, you'll need the following: In this tutorial, I’ll break down how to create a Google Cloud service account, create a bucket in cloud storage, select your dataset to query data, create a new project, extract and export your dataset, and download a CSV file of that data from Google Cloud Storage. BigQuery is a great tool whether you’re looking to build an ETL pipeline or combine multiple data sets, or even transform the data and move it into another warehouse. 
Maybe you’re working on data migration to another warehouse like Amazon Redshift, or maybe you want to clean and query your data after transformation.Įither way, I got you. So you want to extract data from Google BigQuery. Specifically, we'll download a CSV of our data from Google Cloud Storage, without cloud storage, and with a reverse ETL tool. In this article, you'll learn how to export data from the Google BigQuery API with Python.
